
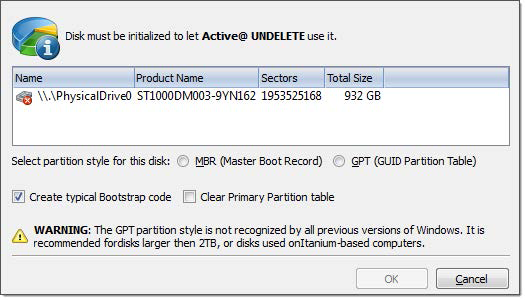

It’s not a major choice, but when you’re asked and the specific computer can use the newer standard, GPT is almost always the right way to go. Because GPT, unlike MBR, keeps crucial partition table information in more than one place on the drive, it can recover from corruption that affects only one part of the drive, so lost boot record data can be rebuilt.

Here is what you should do for your 1TB drive with a single partition to work on both Ubuntu and Windows: Ubuntu allows writing files to NTFS out of the box, so a single NTFS partition is usable from both systems.
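To make the GPT versus MBR distinction concrete, here is a minimal sketch that classifies the first two sectors of a disk image. It assumes 512-byte logical sectors and relies only on two well-known markers: the `0x55 0xAA` boot signature at the end of sector 0, and the ASCII signature `EFI PART` at the start of the GPT header in sector 1. The function name `detect_partition_style` and the helper `fake_disk` are made up for this illustration.

```python
SECTOR_SIZE = 512  # assumption: 512-byte logical sectors


def detect_partition_style(first_two_sectors: bytes) -> str:
    """Return 'GPT', 'MBR' or 'unknown' for the first two sectors of a disk."""
    if len(first_two_sectors) < 2 * SECTOR_SIZE:
        raise ValueError("need at least two sectors of data")
    # Sector 0 of an MBR disk (and the protective MBR of a GPT disk)
    # ends with the boot signature bytes 0x55 0xAA.
    has_boot_signature = first_two_sectors[510:512] == b"\x55\xaa"
    # The GPT header in sector 1 starts with the ASCII text "EFI PART".
    has_gpt_header = first_two_sectors[SECTOR_SIZE:SECTOR_SIZE + 8] == b"EFI PART"
    if has_gpt_header:
        return "GPT"
    if has_boot_signature:
        return "MBR"
    return "unknown"


def fake_disk(gpt: bool) -> bytes:
    """Build a synthetic two-sector image for trying the function out."""
    sector0 = bytearray(SECTOR_SIZE)
    sector0[510:512] = b"\x55\xaa"
    sector1 = bytearray(SECTOR_SIZE)
    if gpt:
        sector1[0:8] = b"EFI PART"
    return bytes(sector0 + sector1)


if __name__ == "__main__":
    print(detect_partition_style(fake_disk(gpt=True)))
    print(detect_partition_style(fake_disk(gpt=False)))
```

On a real machine you would read the first 1024 bytes of the raw device (which usually needs administrator rights) instead of building a synthetic image.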

Partition styles, also sometimes called partition schemes, is a term that refers…

There is a new partitioning style poised to take over from the traditional MBR partitioning scheme. The GUID Partition Table (GPT) offers many advantages over the old partitioning style and may eventually make multibooting a bit easier. It was designed for use with the Unified Extensible Firmware Interface (UEFI), the new motherboard firmware that is about to replace the long…

In this section, we’ll show you how to mount an Amazon S3 file system step by step. Mounting an Amazon S3 bucket using S3FS is a simple process: by following the steps below, you should be able to start experimenting with using Amazon S3 as a drive on your computer immediately.

What are the benefits of a hidden partition compared with just using files on the SD card? I haven’t seen much info about the… If I use a hidden-partition emuNAND, can it be copied if the card gets corrupted? Could I even access it, or copy it? How would I wipe or format the partition and start a new one?

At a high level, every Spark application consists of a driver program that runs the user’s main function and executes various parallel operations on a cluster. The main abstraction Spark provides is a resilient distributed dataset (RDD), which is a collection of elements partitioned across the nodes of the… Here’s an example to convert non-partitioned S3 access logs to partitioned S3 access logs on EMR:
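As a rough illustration of the partitioning idea (this is not the EMR job itself), the sketch below derives a date-partitioned key from a single S3 access log line. It assumes the line carries a bracketed timestamp such as `[06/Feb/2019:00:00:38 +0000]`, and the `year=/month=/day=` layout is one common convention rather than anything this post prescribes. The function name `partitioned_key` and the sample line are hypothetical.

```python
import re
from datetime import datetime

# Assumption: the request time appears in square brackets in the log line.
_TIMESTAMP = re.compile(r"\[([^\]]+)\]")


def partitioned_key(log_line: str, file_name: str) -> str:
    """Build a Hive-style date-partitioned key for the file holding log_line."""
    match = _TIMESTAMP.search(log_line)
    if match is None:
        raise ValueError("no bracketed timestamp found in log line")
    when = datetime.strptime(match.group(1), "%d/%b/%Y:%H:%M:%S %z")
    # Hive-style partition folders: year=YYYY/month=MM/day=DD/
    return f"year={when:%Y}/month={when:%m}/day={when:%d}/{file_name}"


if __name__ == "__main__":
    sample = "owner-id my-bucket [06/Feb/2019:00:00:38 +0000] 192.0.2.3 - REST.GET.OBJECT"
    print(partitioned_key(sample, "access.log"))
```

A job on a cluster would apply the same mapping to every log object and write each one under its new prefix, so that a query for one day only has to read that day's folder.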

During execution, we noticed it took hours and hours to perform the copy. There is no way to make it faster.

Each task in Spark reads data from a particular partition of a Kafka topic, known as a topic-partition. Topic-partitions are ideally uniformly distributed across Kafka brokers. You can read about how Spark places executors here.

Parquet, Spark & S3: Amazon S3 (Simple Storage Service) is an object storage solution that is relatively cheap to use. It does have a few disadvantages vs. a “real” file system; the major one…

This will allow us to create dynamic partitions in the table without any static partition: set hive.exec.dynamic.partition=true; set hive.exec.dynamic.partition.mode=nonstrict; Now if you run the insert query, it will create all required dynamic partitions and insert correct data into each partition.

Partition pruning is a performance optimization that limits the number of files and partitions that Spark reads when querying.

Spark uses the default HashPartitioner to derive the partitioning scheme and also how many partitions to create. Next, we use PartnerPartitionProfile to provide Spark with the criteria to custom-partition the RDD. We use partnerId and hashedExternalId (unique in the partner…

Using the AWS API, the Scout2 Python scripts fetch CloudTrail, EC2, IAM, RDS, and S3 configuration data. Prowler, an AWS CIS benchmark tool, follows the guidelines of the CIS Amazon Web Services Foundations Benchmark and adds further checks.

ORC and Parquet “files” are usually folders (hence “file” is a bit of a misnomer). This has to do with the parallel reading and writing of DataFrame partitions that Spark does. Once the partition information is available, Spark will update the metastore with the partition information. This is done using Hive’s internal alterTable API call. This step is also time-consuming, as it requires us to update the metastore database with the new information.
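The hash-partitioning idea mentioned above can be sketched in a few lines of plain Python: a key is hashed, and the result modulo the number of partitions picks the partition. This is only an illustration of the principle. It uses `zlib.crc32` as a stand-in hash so the result is stable between runs, whereas Spark's own HashPartitioner works from the key's hash code. The names `partition_for` and `hash_partition`, and the sample partner keys, are hypothetical.

```python
import zlib
from collections import defaultdict


def partition_for(key: str, num_partitions: int) -> int:
    """Pick a partition index in [0, num_partitions) from a stable hash of key."""
    if num_partitions <= 0:
        raise ValueError("num_partitions must be positive")
    # crc32 is an assumption made for this sketch, not what Spark uses.
    return zlib.crc32(key.encode("utf-8")) % num_partitions


def hash_partition(records, num_partitions):
    """Group (key, value) records by the partition their key hashes to."""
    partitions = defaultdict(list)
    for key, value in records:
        partitions[partition_for(key, num_partitions)].append((key, value))
    return dict(partitions)


if __name__ == "__main__":
    records = [("partner-1", "a"), ("partner-2", "b"), ("partner-1", "c")]
    for index, rows in sorted(hash_partition(records, 4).items()):
        print(index, rows)
```

The property that matters is that every record with the same key lands in the same partition, which is what lets per-key work run without moving data between machines.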
All datasets based on files can be partitioned. All of the partitions are stored in Amazon S3 so they can be accessible to all machines in the Spark cluster. Since this implementation used Amazon EC2 instances, storing the data in Amazon’s cloud makes for efficient read and write operations. S3 only knows two things: buckets and objects (inside buckets).

ERR_SPARK_SQL_LEGACY_UNION_SUPPORT: Your current Spark version doesn’t support the UNION clause but only supports UNION ALL, which does not remove duplicates.

How to implement secondary sorting in Spark. Hopefully, we can see the usefulness of secondary sorting as well as the ease of implementing it in Spark. As a matter of fact, I consider this post to have a high noise-to-signal ratio, meaning that we did a lot of discussion on what secondary sorting is and…

SQL PARTITION BY clause overview. Summary: in this tutorial, you will learn how to use the SQL PARTITION BY clause to change how the window function calculates the result. The PARTITION BY clause divides a query’s result set into partitions.

Optimized Row Columnar (ORC) file format is a highly efficient columnar format to store Hive data with more than 1,000 columns and improve performance. ORC format was introduced in Hive version 0.11 to use and retain the type information from the table definition.

Added partition starts from instead of 0.

aws s3 ls --summarize --human-readable --recursive s3://bucket-name/directory Accessing the AWS CLI via your Spark runtime isn’t always the easiest, so you can also use some org.apache.hadoop code.

Prior to CDH 5.11, performance of Hive queries that performed dynamic partitioning on S3 was diminished because partitions were loaded into the target table one at a time. In CDH 5.11 and later, optimizations change the underlying logic so that partitions are loaded in parallel.

It is useful for bulk creating or updating partitions.

… .option("truncate", false) … import scala.concurrent.duration._ … val q = records. …
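To show what secondary sorting means in practice, here is a small plain-Python sketch: records are grouped by their key, and within each group the values come out already sorted. In Spark this is usually done with a composite key and `repartitionAndSortWithinPartitions`; the version below only illustrates the result on a single machine. The function name `secondary_sort` and the sample data are hypothetical.

```python
from itertools import groupby
from operator import itemgetter


def secondary_sort(records):
    """Group (key, value) pairs by key, with each group's values sorted ascending."""
    # Sorting on (key, value) puts equal keys next to each other and
    # orders the values inside every run of equal keys.
    ordered = sorted(records, key=itemgetter(0, 1))
    return {
        key: [value for _, value in group]
        for key, group in groupby(ordered, key=itemgetter(0))
    }


if __name__ == "__main__":
    readings = [("b", 2), ("a", 3), ("a", 1), ("b", 1)]
    print(secondary_sort(readings))
```

The point of doing this inside the shuffle on a cluster is that the values for one key never have to be collected and sorted in memory afterwards.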
… Offset") import org.apache.spark.sql.streaming. …

Spark Partition: Properties of Spark Partitioning. Tuples which are in the same partition in Spark are guaranteed to be on the same machine. Every node in the cluster contains more than one Spark partition. The total number of partitions in Spark is configurable.

hive> select * from call_center_s3 where cc_rec_start_date='' Note this is the date range for the table residing against HDFS.

Each worker communicates with the other workers. The master periodically instructs the workers to save the state of their partitions to persistent storage. The workers all reload their partition state from the most recent available checkpoint.

Step 1: Download & unzip Spark. Download the current version of Spark from the official website. Unzip the downloaded file to any location in your system.

Step 2: Download Scala from scala-lang.org and install it. Set the SCALA_HOME environment variable and set the PATH variable to the bin directory of Scala.

Step 3: Start the spark-shell.


Author: James
